As artificial intelligence (AI) continues to permeate industries ranging from healthcare to finance, the ethical risks associated with autonomous decision-making are becoming harder to ignore. While automation offers speed and efficiency, unchecked AI systems can cause serious unintended harm, from biased decisions to privacy violations. This is why every AI system needs a human in the loop (HITL) as an ethical safeguard.

The Role of Human-in-the-Loop AI

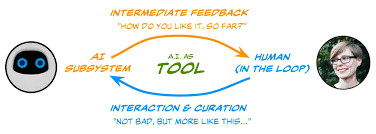

Human-in-the-loop AI refers to systems in which humans remain actively involved in the decision-making process, providing oversight, judgment, or intervention. Rather than replacing human agency, these systems are designed to augment it, keeping machine intelligence aligned with human values.

HITL systems are essential for ensuring ethical automation, especially in high-stakes environments like autonomous driving, predictive policing, and medical diagnostics. These applications require not only technical accuracy but also moral reasoning—something that AI, in its current state, cannot reliably provide.

Ethical Automation Isn’t Just a Technical Challenge

It’s easy to assume that improving the accuracy or transparency of an AI model will solve ethical problems. But ethical automation goes beyond the algorithm. It involves ongoing processes of reflection, accountability, and context-sensitive judgment.

For instance, in a loan approval system, AI might process applications faster, but it takes a human to assess whether the training data reflects systemic biases, or whether a marginal case deserves deeper review. This blend of machine efficiency with human judgment is where HITL AI becomes indispensable.
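The routing step in the loan example can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not a production design: the model is assumed to output an approval score between 0 and 1, and the function `route_application` and the cut-offs `DECLINE_BELOW` and `APPROVE_AT` are hypothetical names and values chosen for illustration. Scores that fall between the two cut-offs are treated as marginal and sent to a human reviewer instead of being decided automatically.

```python
from dataclasses import dataclass

# Hypothetical cut-offs (illustrative assumptions, not recommended values):
# scores at or above APPROVE_AT are auto-approved, scores below
# DECLINE_BELOW are auto-declined, and everything in between is a
# marginal case that is routed to a human reviewer.
DECLINE_BELOW = 0.4
APPROVE_AT = 0.7


@dataclass
class Decision:
    application_id: str
    score: float
    outcome: str  # "approve", "decline", or "human_review"


def route_application(application_id: str, score: float) -> Decision:
    """Route one application based on a model's approval score in [0, 1]."""
    if not 0.0 <= score <= 1.0:
        raise ValueError(f"score must be in [0, 1], got {score}")
    if score >= APPROVE_AT:
        outcome = "approve"
    elif score < DECLINE_BELOW:
        outcome = "decline"
    else:
        # Marginal case: the model does not decide; a person does.
        outcome = "human_review"
    return Decision(application_id, score, outcome)


if __name__ == "__main__":
    for app_id, score in [("A-1", 0.92), ("A-2", 0.55), ("A-3", 0.12)]:
        print(route_application(app_id, score))
```

Where to place the cut-offs is itself a judgment call that people, not the model, should own. A stricter variant could also route every automated decline to a reviewer, since declines affect applicants most directly.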

The Carlo Ethics Loop: A Framework for Responsible AI

One promising model for ensuring ethical AI development is the Carlo ethics loop, a feedback framework that incorporates ethical reflection at every stage of the AI lifecycle. Named after ethicist Carlo V. Bellini, the loop emphasizes iterative evaluation involving multiple stakeholders—designers, users, regulators, and ethicists.

The Carlo ethics loop advocates for:

Continuous human feedback to refine model behavior.

Scenario testing to explore ethical dilemmas.

Escalation mechanisms when AI decisions approach ethical boundaries.
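The escalation and feedback practices above can be illustrated with a short Python sketch. It is not an implementation of the Carlo ethics loop itself; it simply assumes two hypothetical escalation rules (low model confidence, or a case flagged as high-impact) and a hypothetical `ReviewLog` that records what human reviewers decided. The `CONFIDENCE_FLOOR` value of 0.8 and all names here are illustrative assumptions.

```python
from dataclasses import dataclass, field

# Hypothetical escalation threshold (an illustrative assumption): decisions
# below this confidence are escalated to a human reviewer.
CONFIDENCE_FLOOR = 0.8


@dataclass
class Case:
    case_id: str
    model_decision: str
    confidence: float
    high_impact: bool = False  # e.g. flagged by policy as ethically sensitive


@dataclass
class ReviewLog:
    """Records human reviews so model behavior can be audited and refined."""
    entries: list[tuple[str, str, str]] = field(default_factory=list)

    def record(self, case: Case, human_decision: str) -> None:
        self.entries.append((case.case_id, case.model_decision, human_decision))

    def override_rate(self) -> float:
        """Fraction of reviewed cases where the human disagreed with the model."""
        if not self.entries:
            return 0.0
        overrides = sum(1 for _, model, human in self.entries if model != human)
        return overrides / len(self.entries)


def needs_escalation(case: Case) -> bool:
    """Escalate when the model is unsure, or when the case is high-impact
    even if the model is confident."""
    return case.confidence < CONFIDENCE_FLOOR or case.high_impact


if __name__ == "__main__":
    log = ReviewLog()
    cases = [
        Case("c1", "deny", 0.95),                     # confident, routine: automated
        Case("c2", "deny", 0.62),                     # low confidence: escalated
        Case("c3", "approve", 0.97, high_impact=True),  # high-impact: escalated
    ]
    # Hypothetical reviewer decisions for the escalated cases.
    human_decisions = {"c2": "approve", "c3": "approve"}
    for case in cases:
        if needs_escalation(case):
            log.record(case, human_decisions[case.case_id])
    print(log.override_rate())  # 0.5 (reviewer overturned c2, agreed on c3)
```

The override rate is one simple signal a team could monitor: a persistently high rate suggests that the model or its thresholds need revisiting, which is the kind of continuous human feedback the loop calls for.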

By embedding this loop into AI workflows, organizations can maintain a living dialogue between machine behavior and human values, keeping ethical concerns at the forefront.

When Autonomy Meets Accountability

Perhaps the most compelling reason for human-in-the-loop AI is accountability. Who is responsible when an autonomous vehicle makes a fatal mistake? Or when an algorithm denies healthcare coverage based on flawed logic? Without human oversight, we risk creating ethical vacuums in which responsibility becomes as opaque as the algorithms themselves.

HITL systems restore a crucial link between action and responsibility. By keeping humans involved, especially at critical junctures, we ensure that automation doesn’t become a moral blind spot.

Conclusion

As AI systems become more capable and complex, the need for ethical automation grows more urgent. Human-in-the-loop design and frameworks like the Carlo ethics loop are not optional add-ons; they are foundational safeguards for building trustworthy, responsible, and humane AI.

Without a human in the loop, we risk losing control not only over the technology itself but also over the ethical principles that should guide its use. In a world increasingly shaped by algorithms, keeping humans at the center is not just wise; it’s essential.