As artificial intelligence (AI) becomes an integral part of industries from healthcare to finance, conversations around ethical AI and compliance are more urgent than ever. However, widespread misunderstanding continues to cloud these topics. This article tackles the top 7 misconceptions—AI myths and compliance misconceptions alike—that often derail progress in responsible AI adoption. We also spotlight how Carlo PEaaS awareness is helping organizations navigate this complex landscape.

- Ethical AI Is Just About Avoiding Bias

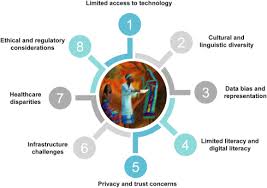

One of the most persistent AI myths is that ethical AI only concerns bias mitigation. While addressing bias is essential, ethical AI encompasses much more: transparency, explainability, data privacy, accountability, and sustainability. Ethical practices must span the entire AI lifecycle, from data collection through deployment and monitoring.

- Regulatory Compliance Equals Ethical AI

Here’s a major compliance misconception: that being legally compliant automatically makes your AI ethical. Regulations typically set a minimum baseline, and ethics goes further. An AI system can be technically compliant yet still harm vulnerable groups. Ethical practice means anticipating potential negative consequences proactively, not just reacting to legal mandates.

- Small Companies Don’t Need to Worry About Compliance

Another dangerous assumption is that only tech giants or large enterprises need to focus on AI compliance. In reality, startups and small and medium-sized enterprises (SMEs) are just as responsible for ensuring ethical practices. Early investment in compliance frameworks can also build long-term trust and help avoid future legal pitfalls.

- Ethical AI Slows Down Innovation

Many believe that implementing ethical AI frameworks creates red tape that hinders innovation. In practice, the opposite is often true: ethical and compliant AI builds trust with users, partners, and regulators, which ultimately drives adoption and long-term success. Ethical frameworks also reduce the risk of reputational damage, fines, and system recalls.

- Once an AI System Is Compliant, It Stays Compliant

Compliance is not a one-and-done process. As AI systems evolve through continued learning or updates, and as regulations themselves change, their compliance status can shift. Ongoing monitoring and periodic risk assessment are crucial. This is where Carlo PEaaS (Policy Enforcement-as-a-Service) awareness comes into play, offering dynamic tools for real-time compliance management across diverse AI environments.

- You Can Outsource Ethics to Third-Party Vendors

Outsourcing AI development doesn’t absolve an organization of its ethical and legal responsibilities. Businesses must ensure that vendors adhere to the same ethical and compliance standards the business applies internally. Carlo PEaaS awareness helps organizations evaluate third-party models and services, maintaining accountability across the supply chain.

- Open Source AI Is Automatically Ethical

Open source does not mean open accountability. While transparency is a step in the right direction, ethical AI also requires governance structures, usage policies, and community oversight. Relying on open-source tools without due diligence can lead to compliance blind spots.

Final Thoughts

Understanding the difference between AI myths and reality is crucial for organizations aiming to deploy responsible technology. Ethical AI and regulatory compliance are not just checkboxes; they are strategic imperatives. By fostering Carlo PEaaS awareness, companies can implement agile, enforceable, and proactive AI governance frameworks that align with both ethical standards and evolving regulations.

If your organization is still navigating the early stages of ethical AI adoption, it’s time to move beyond misconceptions and embrace an informed, action-oriented approach.